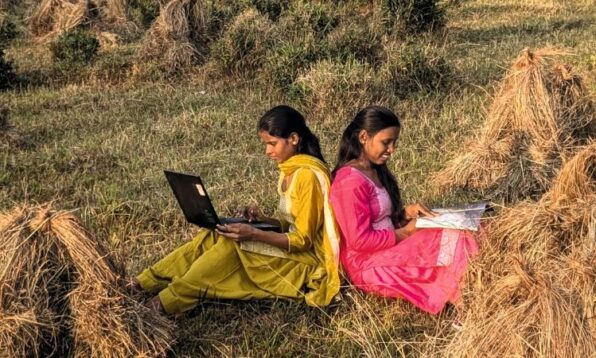

Artificial intelligence is booming in India, especially on social media. AI decides what content we watch and what gets flagged as harmful. When AI flags any content as harmful or explicit, we are saved from the trauma of watching its unfiltered violence. But did you know that somebody else soaks up that trauma to save the rest of us? And these aren’t sophisticated AI engineers; they are women in rural India who are trying their best to make a living. But why are these women choosing to watch traumatic content?

On paper, the job sounds fancy (AI trainer or content moderator), but in reality, it’s very traumatic. You have to sit for hours in front of a screen, watching and categorising graphic sexual violence and extreme abuse so that algorithms can learn what to block. Many of the people doing this work are women, drawn to it because it offers steady pay, remote flexibility, or one of the few accessible digital roles in their region. For some, it is a financial lifeline; for others, the true nature of the content only becomes clear once they are already inside the system. What looks like an easy, work-from-anywhere job turns into repeated exposure to the internet’s darkest material.

We spoke to psychiatrist Dr Era Dutta to understand the scope of how damaging this work is, and she was quite clear in her answer.

A new form of emotional labour

When we asked her if this could be categorised as a digital form of emotional labour, Dr Era agreed. Emotional labour traditionally involves managing one’s feelings to meet the demands of professional or social roles. Just as in their personal lives, women are doing emotional labour online too. They are watching violent and harmful content to protect everyone else from it. “The work is invisible and under-supported,” Dr Era said. And once again, the onus of safety has fallen on women. They are responsible for making everyone’s life easy while damaging their own.

Beyond the emotional labour itself, there is the question of how it affects these women. Dr Era was clear: the psychological effects of repeated exposure to graphic sexual and violent material are real, cumulative, and deeply personal.

“Constant exposure to sexual and violent content over time alters how the brain processes trauma,” explained Dr Era. The brain does not always distinguish between witnessing violence in person and witnessing it repeatedly on a screen. The nervous system simply responds to threat cues; it doesn’t matter if those threat cues are caused by what we watch on the screen. And when these threat cues become regular, your brain just makes threat the default setting.

Dr Era said that two common patterns emerge from this kind of work. “Some individuals become oversensitive to triggers, while others move towards desensitisation, such as feeling numb or detached. Both carry long-term consequences for intimacy, trust, and a sense of safety.”

Hypervigilance can make the world feel unsafe. Desensitisation affects relationships, sexual wellbeing, and even parenting. Over time, the brain recalibrates itself around danger. Repeated secondary exposure to trauma can lead to what clinicians call vicarious trauma, with symptoms that mirror post-traumatic stress.

The added toll of a rural setting

The psychological strain multiplies in rural and conservative settings where topics like sex remain taboo and mental health stays on the margins. “When individuals are not sensitised to healthy frameworks around consent, sexuality, and power dynamics, disturbing content may be processed without context,” Dr Era said. Without comprehensive sex education, trauma awareness, or access to counselling, the distress has no outlet, which is why these jobs, outsourced by big tech companies, remain dangerous work. In fact, research shows that even in workplaces where support and interventions are present for this kind of work, the workers suffer from significant levels of secondary trauma.

The Guardian, in a report, mentioned that these content moderators watch around 800 videos and images a day, even 1,000 on some days, to train the algorithm to identify harm and violence. Dr Era says this is where the problem starts. “One of the most essential safeguards for content moderators has to be limiting their daily exposure.” She also suggests that there should be a rotation of harmful content, meaning they shouldn’t be filtering only explicit content every day. The content should keep shifting from intensely violent to something lighter. Along with this, structured breaks, confidential trauma-informed counselling, and mandatory debriefing sessions are essential, according to Dr Era.

While artificial intelligence in India is changing our lives for the better, it is also ruining many women’s lives. So, if we are willing to benefit from this invisible labour, we must also be willing to acknowledge its weight and insist that those carrying it are properly protected.

If you have any mental health concerns, you can reach out to Dr Era Dutta here.
